42 PyTorch DataLoader without labels

github.com › pytorch › pytorch · AttributeError: 'NoneType' object has no attribute '_free ... · I am facing the same issue while training my YOLOV5 model. Exception ignored in: Traceback (most recent call last): File "miniconda3\envs\yo1\lib\site-packages\torch\multiprocessing\reductions.py", line 36, in __del__ File "miniconda3\envs\yo1\lib\site-packages\torch\storage.py", line 520, in _free_weak_ref AttributeError: 'NoneType ...

debuggercafe.com › custom-object-detection-using · Custom Object Detection using PyTorch Faster RCNN · Oct 25, 2021 · For the PyTorch framework, it will be best if you have the latest version, that is PyTorch version 1.9.0 at the time of writing this tutorial. If a new version is out there while you are reading, feel free to install that. You also need the Albumentations library for image augmentations. This provides very easy methods to apply data ...

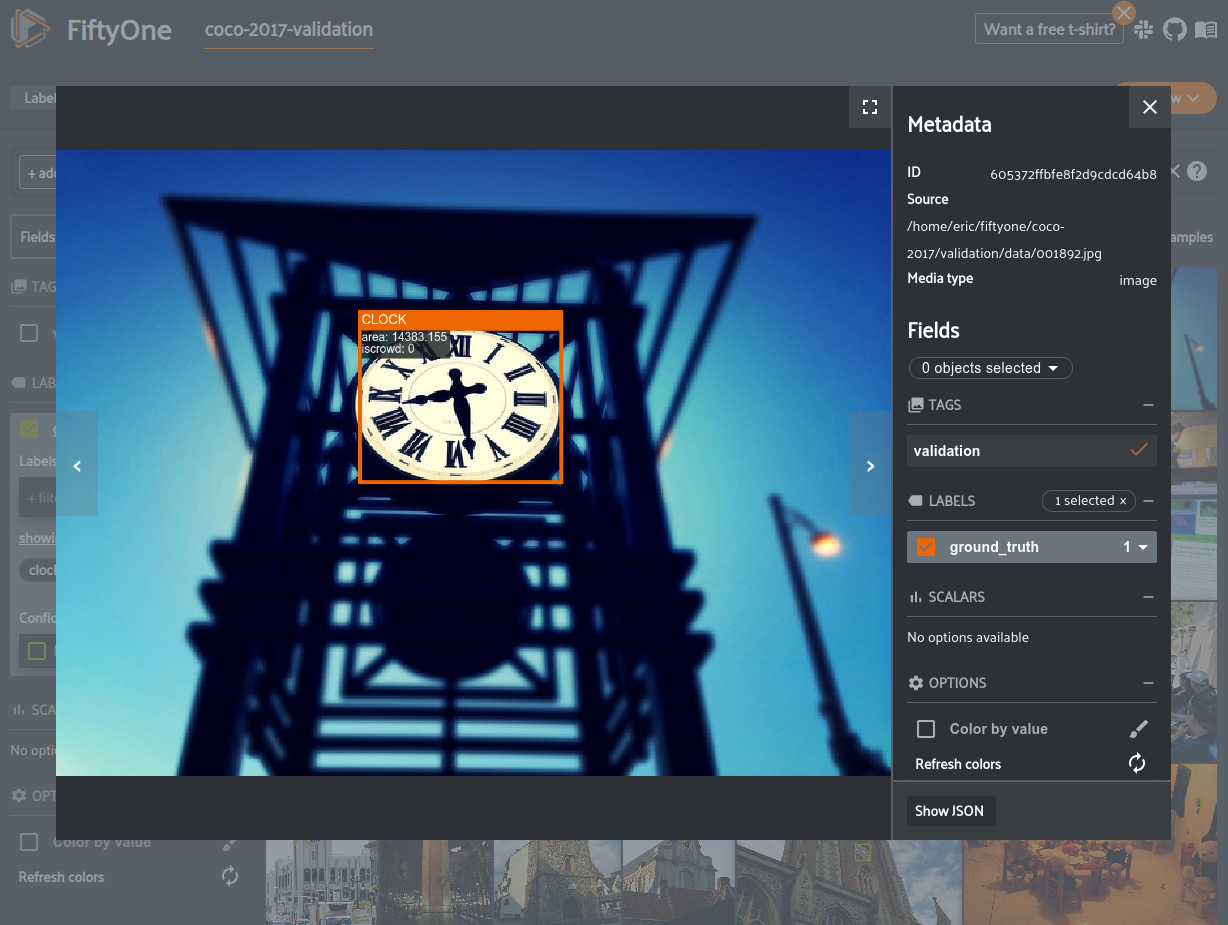

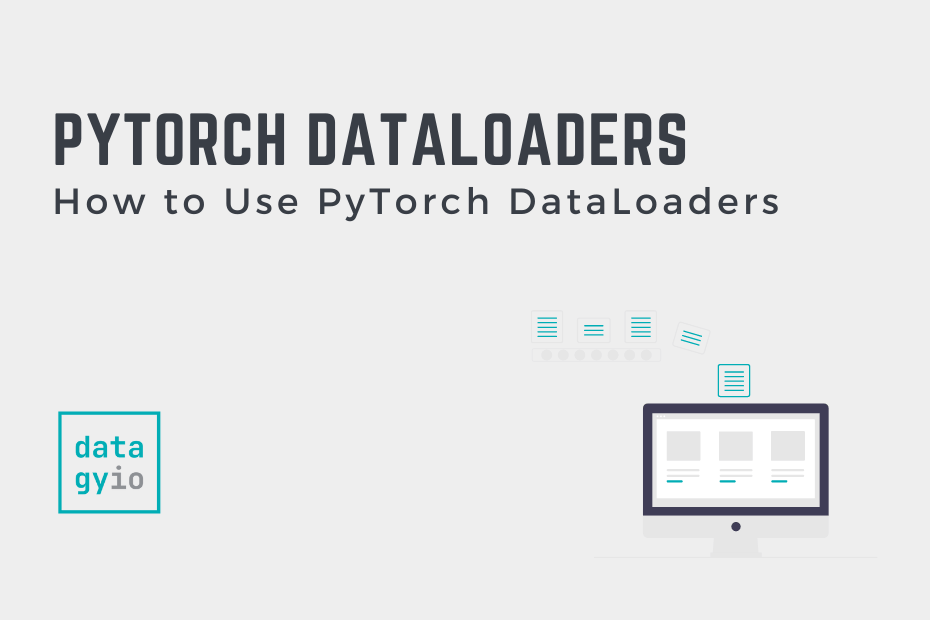

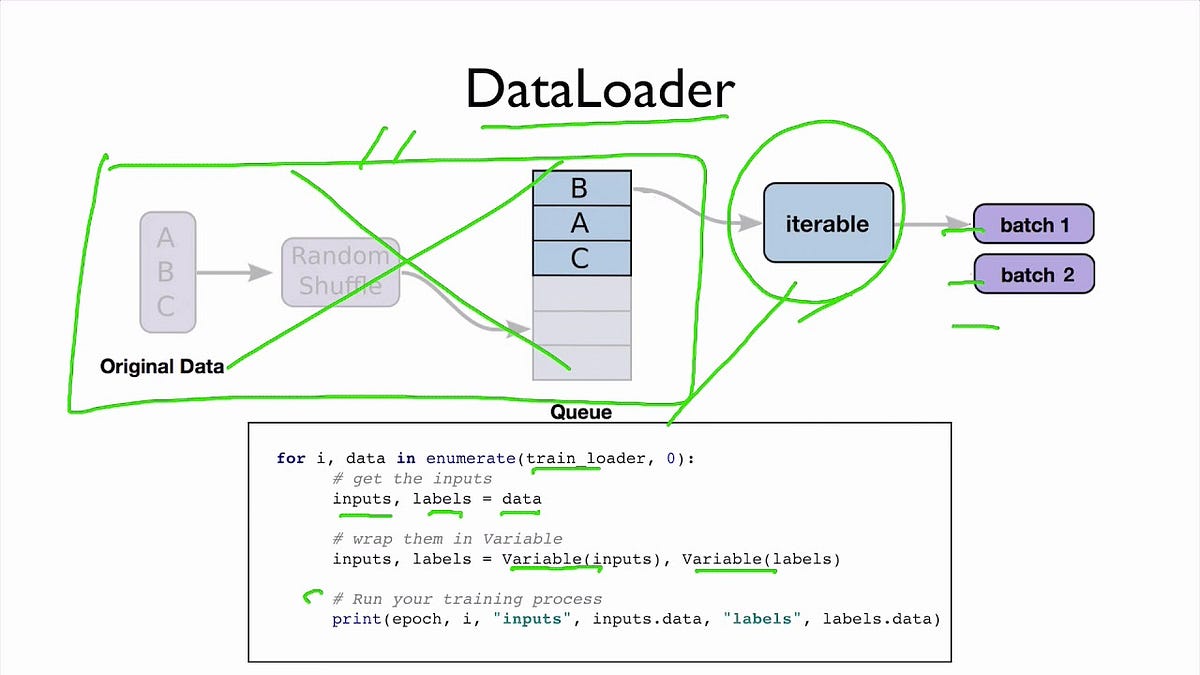

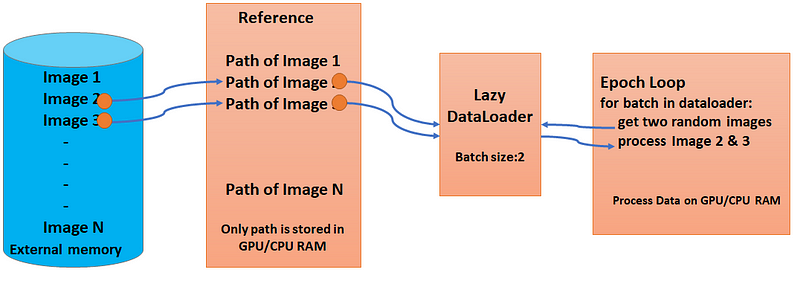

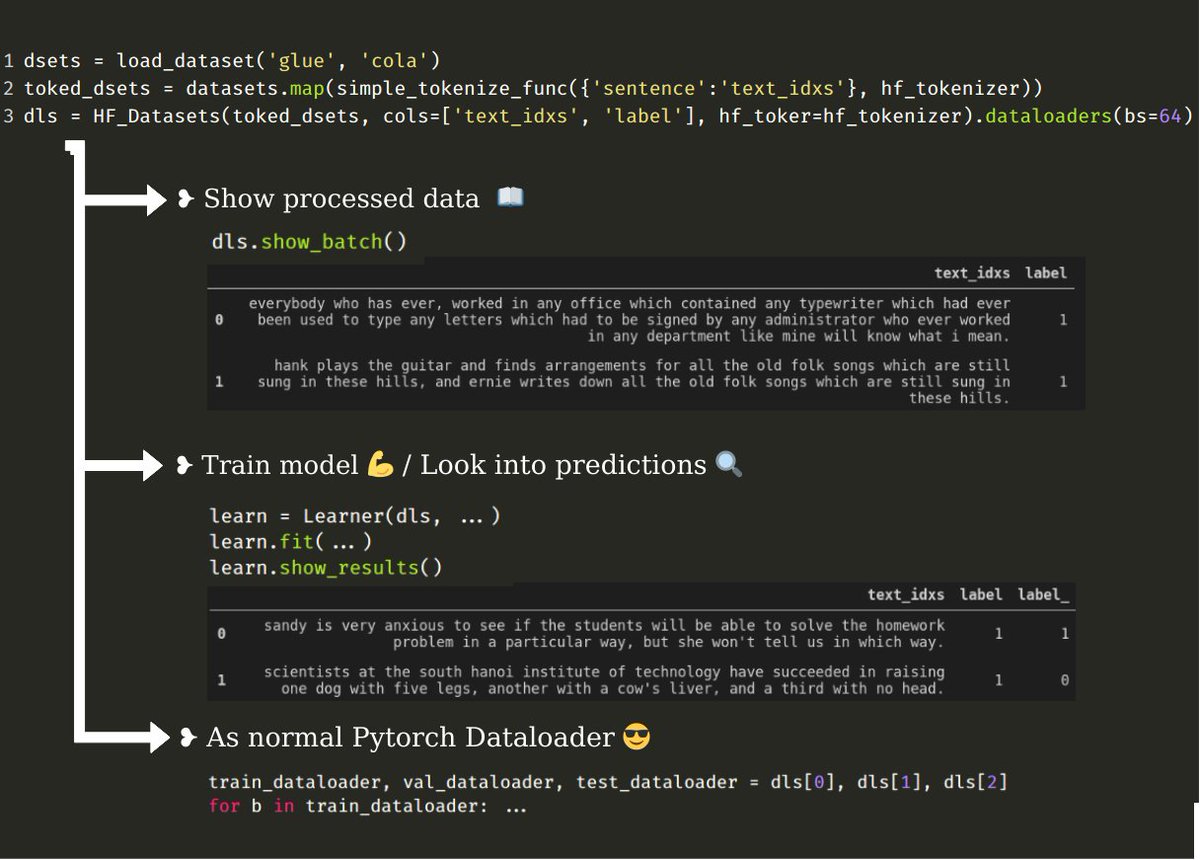

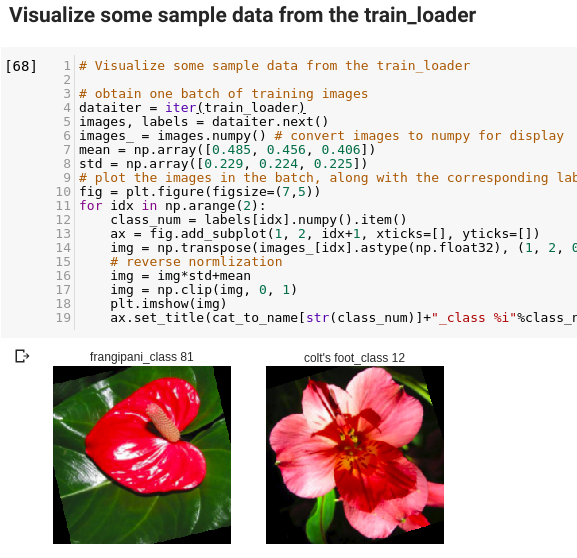

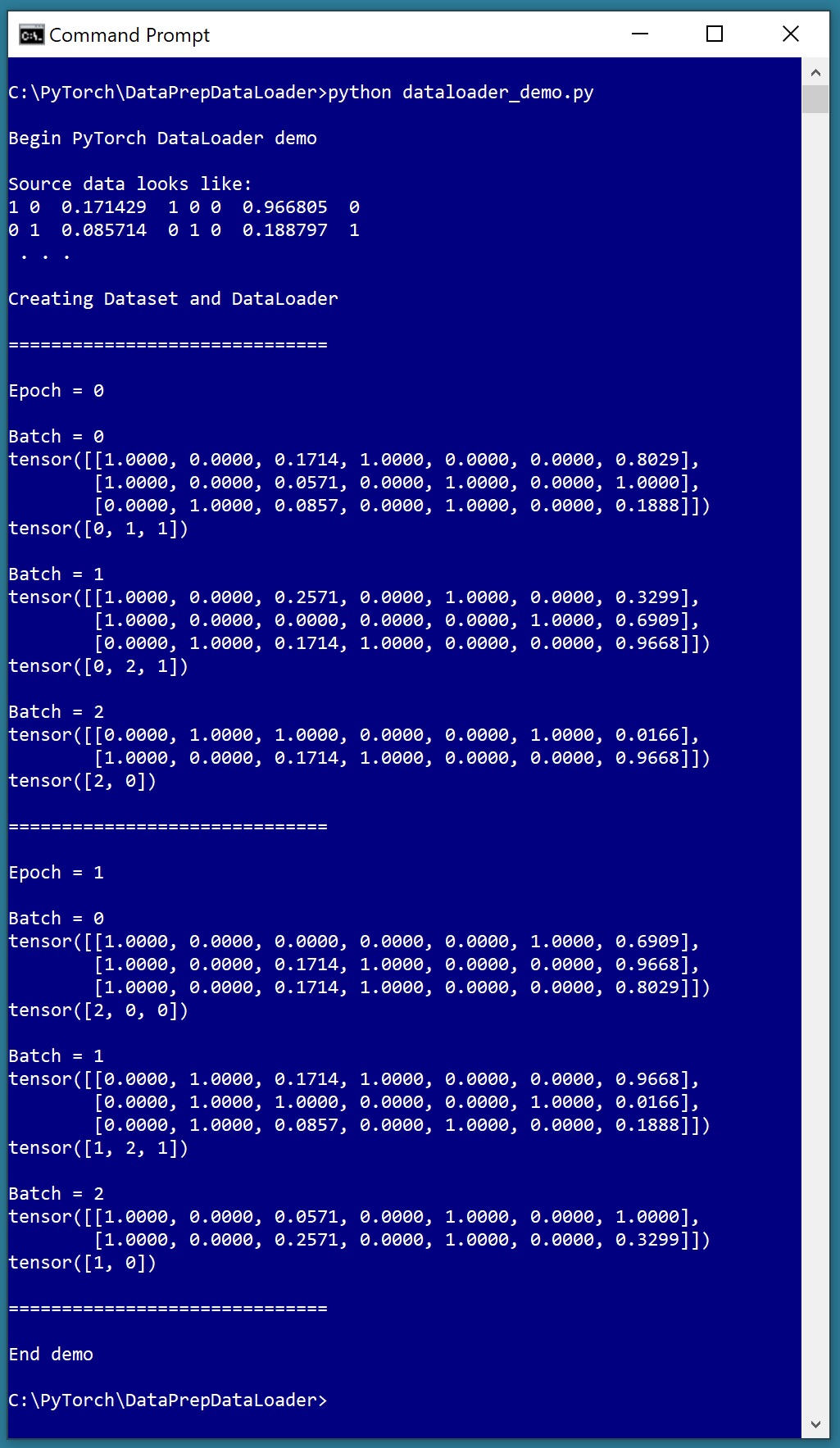

pyimagesearch.com › image-data-loaders-in-pytorch · Image Data Loaders in PyTorch - PyImageSearch · Oct 04, 2021 · A PyTorch Dataset provides functionality to load and store data samples with their corresponding labels. PyTorch also has a built-in DataLoader class that wraps an iterable around the dataset, making it easy to access and iterate over the data samples.
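The Dataset and DataLoader pairing described above also works when there are no labels at all. Here is a minimal sketch, assuming the samples already sit in one tensor; the class name `UnlabeledDataset` and the fake 3x8x8 "images" are hypothetical and not taken from any of the linked pages.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class UnlabeledDataset(Dataset):
    """Hypothetical dataset that yields samples only, with no labels."""

    def __init__(self, samples: torch.Tensor):
        self.samples = samples

    def __len__(self) -> int:
        # Number of samples is the size of the first dimension.
        return self.samples.shape[0]

    def __getitem__(self, idx: int) -> torch.Tensor:
        # Return just the sample; there is no (sample, label) tuple.
        return self.samples[idx]


# Ten placeholder "images" of shape 3x8x8 stand in for real data.
dataset = UnlabeledDataset(torch.zeros(10, 3, 8, 8))
loader = DataLoader(dataset, batch_size=4, shuffle=False)

# Each batch is a single stacked tensor rather than an (inputs, labels) pair.
batch_shapes = [tuple(batch.shape) for batch in loader]
print(batch_shapes)
```

Because `__getitem__` returns a bare tensor, the default collate function stacks samples into one tensor per batch, so the loop body receives `batch` directly instead of unpacking a pair.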

PyTorch DataLoader without labels

github.com › pytorch › pytorch · possible deadlock in dataloader · Issue #1355 · pytorch ... · Apr 25, 2017 · This is with PyTorch 1.10.0 / CUDA 11.3 and PyTorch 1.8.1 / CUDA 10.2. Essentially what happens is at the start of training there are 3 processes when doing DDP with 0 workers and 1 GPU. When the hang happens, the main training process gets stuck on iterating over the dataloader and goes to 0% CPU usage. The other two processes are at 100% CPU.

How to load a list of numpy arrays to pytorch dataset loader? · 08.06.2017 · I think what DataLoader actually requires is an input that subclasses Dataset. You can either write your own dataset class that subclasses Dataset or use TensorDataset as I have done below: `import torch`, `import numpy as np`, `from torch.utils.data import TensorDataset, DataLoader`, `my_x = [np.array([[1.0,2],[3,4]]),np.array([[5.,6],[7,8]])] # a list of numpy arrays` …

zhuanlan.zhihu.com › p › 270028097 · PyTorch Source Code Analysis and Practice (1): Data Loading with Dataset, Sampler, and DataLoader ... · The aim is to understand and use PyTorch more thoroughly at the code level (this is a preliminary plan and may change later). Also: corrections of any omissions are welcome, as is discussion. Let's begin. 1 Source code analysis. PyTorch's data loading module involves three classes in total: Dataset, Sampler, and DataLoader
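The quoted answer is cut off after `my_x`, so the rest of its code is not known. One possible continuation for the label-free case is sketched below; the stacking step and the names `tensor_x`, `dataset`, `loader`, and `batches` are assumptions, not the original author's code.

```python
import numpy as np
import torch
from torch.utils.data import TensorDataset, DataLoader

# The list of numpy arrays from the quoted snippet.
my_x = [np.array([[1.0, 2], [3, 4]]), np.array([[5.0, 6], [7, 8]])]

# Assumption: all arrays share one shape, so they can be stacked into a
# single (N, 2, 2) array and converted to a tensor (dtype stays float64).
tensor_x = torch.from_numpy(np.stack(my_x))

# TensorDataset accepts any number of tensors; passing only the inputs
# gives a dataset with no labels.
dataset = TensorDataset(tensor_x)
loader = DataLoader(dataset, batch_size=1)

batches = []
for (x,) in loader:  # each item is a one-element sequence holding the batch
    batches.append(x)

print(len(batches), tuple(batches[0].shape))
```

With a single tensor, `TensorDataset` still returns a one-element tuple per sample, which is why the loop unpacks `(x,)` rather than `x`.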

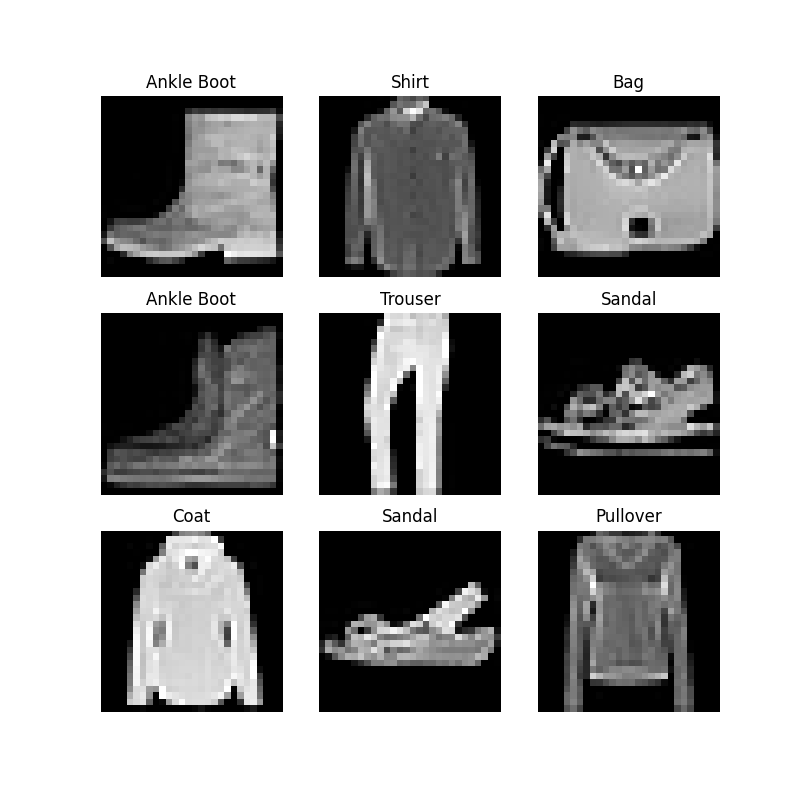

pytorch.org › docs › stable · torch.utils.tensorboard — PyTorch 1.12 documentation

› pytorch-mnist · PyTorch MNIST | Complete Guide on PyTorch MNIST - EDUCBA · Using PyTorch on MNIST Dataset. It is easy to use PyTorch with the MNIST dataset for all kinds of neural networks. The DataLoader module is needed to implement a neural network, and we can see the input and hidden layers. Activation functions need to be applied along with loss and optimizer functions so that we can implement the training loop.
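The training loop that the MNIST entry mentions can also run on a DataLoader that carries no labels, for example by reconstructing the input. The sketch below is an assumption-heavy illustration: it uses random tensors instead of MNIST, and the tiny autoencoder, learning rate, and epoch count are arbitrary choices rather than anything from the linked guide.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Assumption: 64 random 16-dimensional vectors stand in for real data.
data = torch.rand(64, 16)
loader = DataLoader(TensorDataset(data), batch_size=16, shuffle=True)

# A small autoencoder: the input itself is the training target,
# so no labels are required.
model = nn.Sequential(nn.Linear(16, 4), nn.ReLU(), nn.Linear(4, 16))
loss_fn = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

epoch_losses = []
for epoch in range(5):
    total = 0.0
    for (x,) in loader:
        optimizer.zero_grad()
        loss = loss_fn(model(x), x)  # reconstruction loss against the input
        loss.backward()
        optimizer.step()
        total += loss.item()
    # Average loss over the batches in this epoch.
    epoch_losses.append(total / len(loader))

print(epoch_losses)
```

The loop has the usual shape (zero gradients, forward pass, loss, backward pass, optimizer step); the only change from a supervised loop is that the batch is compared against itself.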


![\[PDF\] PyTorch Metric Learning | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/1e0b3982e7f9ceeeb7fe676d8791e240700ca3da/1-Figure1-1.png)
